Introduction

In the previous article, I explained how to train anomalib on custom data.

This time, I will modify the inference program to make it easier to use.

Prerequisites

The prerequisites are as follows.

- torch == 1.12.1+cu113

- pytorch_lightning == 1.9.5
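To confirm that your environment matches these versions, a small check like the following may help. It is a sketch that uses only the standard library's importlib.metadata; the helper name check_versions is hypothetical, and it assumes the packages are installed under the distribution names torch and pytorch-lightning.

```python
from importlib.metadata import PackageNotFoundError, version
from typing import Dict, Optional, Tuple

# Versions from the prerequisites list. "pytorch-lightning" is assumed to be the
# installed distribution name of the pytorch_lightning package.
EXPECTED = {"torch": "1.12.1+cu113", "pytorch-lightning": "1.9.5"}


def check_versions(expected: Dict[str, str]) -> Dict[str, Tuple[Optional[str], str]]:
    """Hypothetical helper: return {name: (installed, expected)} for each mismatch.

    installed is None when the distribution is not installed at all.
    """
    mismatches: Dict[str, Tuple[Optional[str], str]] = {}
    for name, wanted in expected.items():
        try:
            installed: Optional[str] = version(name)
        except PackageNotFoundError:
            installed = None
        if installed != wanted:
            mismatches[name] = (installed, wanted)
    return mismatches


if __name__ == "__main__":
    problems = check_versions(EXPECTED)
    if problems:
        for name, (installed, wanted) in problems.items():
            print(f"{name}: installed {installed}, expected {wanted}")
    else:
        print("All prerequisite versions match.")
```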

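Before inference

To read camera frames with OpenCV and run inference on them, we need to use torch directly instead of pytorch_lightning.

Running inference through pytorch_lightning requires building a data loader, so we will not use it this time.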
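Without a data loader, frames can simply be handed to the model one at a time. The following is a minimal, standard-library-only sketch of that loop structure under stated assumptions: run_on_stream is a hypothetical helper, read is assumed to follow the (ok, frame) return contract of OpenCV's cv2.VideoCapture.read(), and predict stands in for the inferencer.

```python
from typing import Any, Callable, Iterator, Optional, Tuple


def run_on_stream(
    read: Callable[[], Tuple[bool, Any]],
    predict: Callable[[Any], Any],
    max_frames: Optional[int] = None,
) -> Iterator[Any]:
    """Hypothetical helper: yield one prediction per frame, with no DataLoader.

    read() is assumed to return (ok, frame) like cv2.VideoCapture.read().
    """
    count = 0
    while max_frames is None or count < max_frames:
        ok, frame = read()
        if not ok:  # camera closed or end of the stream
            break
        yield predict(frame)
        count += 1


# With a real camera (not run here), usage might look like:
#   cap = cv2.VideoCapture(0)
#   rgb = lambda f: cv2.cvtColor(f, cv2.COLOR_BGR2RGB)
#   for result in run_on_stream(cap.read, lambda f: inferencer.predict(image=rgb(f))):
#       print(result.pred_score, result.pred_label)

if __name__ == "__main__":
    # Fake reader and predictor so the loop can be tried without a camera.
    frames = iter([(True, "frame-0"), (True, "frame-1"), (False, None)])
    print(list(run_on_stream(lambda: next(frames), lambda f: f.upper())))
    # prints ['FRAME-0', 'FRAME-1']
```

Passing read and predict in as functions keeps the loop independent of both OpenCV and anomalib, which is what makes it testable with the fake inputs above.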

Training a torch model

First, edit src/anomalib/models/padim/config.yaml.

```yaml
dataset:
  name: custom
  format: folder
  path: ./datasets/MVTec/custom
  # category: custom
  normal_dir: train/good
  normal_test_dir: test/good
  abnormal_dir: test/defect
  mask_dir: null
  extensions: null
  # task: segmentation
  task: classification
  train_batch_size: 8
  eval_batch_size: 8
  num_workers: 2
  image_size: 256 # dimensions to which images are resized (mandatory)
  center_crop: null # dimensions to which images are center-cropped after resizing (optional)
  normalization: imagenet # data distribution to which the images will be normalized: [none, imagenet]
  transform_config:
    train: null
    eval: null
  test_split_mode: from_dir # options: [from_dir, synthetic]
  test_split_ratio: 0.1 # fraction of train images held out testing (usage depends on test_split_mode)
  val_split_mode: same_as_test # options: [same_as_test, from_test, synthetic]
  val_split_ratio: 0.1 # fraction of train/test images held out for validation (usage depends on val_split_mode)
  tiling:
    apply: false
    tile_size: null
    stride: null
    remove_border_count: 0
    use_random_tiling: False
    random_tile_count: 16

model:
  name: padim
  # backbone: resnet18
  backbone: wide_resnet50_2
  pre_trained: true
  layers:
    - layer1
    - layer2
    - layer3
  normalization_method: min_max # options: [none, min_max, cdf]

metrics:
  image:
    - F1Score
    - AUROC
  pixel:
    - F1Score
    - AUROC
  threshold:
    method: adaptive #options: [adaptive, manual]
    manual_image: null
    manual_pixel: null

visualization:
  show_images: False # show images on the screen
  save_images: True # save images to the file system
  log_images: True # log images to the available loggers (if any)
  image_save_path: null # path to which images will be saved
  mode: full # options: ["full", "simple"]

project:
  seed: 42
  path: ./results

logging:
  logger: [] # options: [comet, tensorboard, wandb, csv] or combinations.
  log_graph: false # Logs the model graph to respective logger.

optimization:
  # export_mode: null # options: torch, onnx, openvino
  export_mode: torch

# PL Trainer Args. Don't add extra parameter here.
trainer:
  enable_checkpointing: true
  default_root_dir: null
  gradient_clip_val: 0
  gradient_clip_algorithm: norm
  num_nodes: 1
  devices: 1
  enable_progress_bar: true
  overfit_batches: 0.0
  track_grad_norm: -1
  check_val_every_n_epoch: 1 # Don't validate before extracting features.
  fast_dev_run: false
  accumulate_grad_batches: 1
  max_epochs: 1
  min_epochs: null
  max_steps: -1
  min_steps: null
  max_time: null
  limit_train_batches: 1.0
  limit_val_batches: 1.0
  limit_test_batches: 1.0
  limit_predict_batches: 1.0
  val_check_interval: 1.0 # Don't validate before extracting features.
  log_every_n_steps: 50
  accelerator: cpu # <"cpu", "gpu", "tpu", "ipu", "hpu", "auto">
  strategy: null
  sync_batchnorm: false
  precision: 32
  enable_model_summary: true
  num_sanity_val_steps: 0
  profiler: null
  benchmark: false
  deterministic: false
  reload_dataloaders_every_n_epochs: 0
  auto_lr_find: false
  replace_sampler_ddp: true
  detect_anomaly: false
  auto_scale_batch_size: false
  plugins: null
  move_metrics_to_cpu: false
  multiple_trainloader_mode: max_size_cycle
```

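On line 74, I changed export_mode under optimization from null to torch, so that training also exports the model as a torch file (weights/torch/model.pt) that can be loaded without pytorch_lightning.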
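The config above also sets normalization_method: min_max, which decides how the pred_score printed by the inference program is scaled. The following sketch assumes the min-max formula used in anomalib 0.x post-processing, in which the adaptive threshold is mapped to 0.5 and the result is clipped to [0, 1]. The function names and the numbers in the example are hypothetical; min_val and max_val stand for the smallest and largest raw scores observed during validation.

```python
def normalize_min_max(score: float, threshold: float, min_val: float, max_val: float) -> float:
    """Map a raw anomaly score so that the threshold lands on 0.5, clipped to [0, 1].

    Assumption: mirrors the min-max normalization in anomalib 0.x post-processing.
    """
    normalized = (score - threshold) / (max_val - min_val) + 0.5
    return min(max(normalized, 0.0), 1.0)


def to_label(normalized_score: float) -> str:
    # After normalization the decision boundary sits at 0.5 (assumed >= comparison).
    return "Anomalous" if normalized_score >= 0.5 else "Normal"


if __name__ == "__main__":
    # Hypothetical raw statistics: threshold 12.0, validation scores between 4.0 and 20.0.
    s = normalize_min_max(12.5, threshold=12.0, min_val=4.0, max_val=20.0)
    print(s, to_label(s))  # prints 0.53125 Anomalous
```

Under this assumption, a score slightly above 0.5 means the raw anomaly score was only just above the adaptive threshold.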

Training command

The training command is the same as before.

```shell
python .\tools\train.py --model padim
```

Inference command

```shell
python tools/inference/torch_inference.py \
    --config src/anomalib/models/padim/config.yaml \
    --weights results/padim/custom/run/weights/torch/model.pt \
    --input ./datasets/MVTec/custom/test/defect/1.png \
    --output results/padim/custom/test/1_result.png \
    --device cpu \
    --task classification \
    --show False \
    --visualization_mode simple
```

Running the above gives the same result as inference with pytorch_lightning.
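The --weights path above points at the file produced because of export_mode: torch. If training was run without that setting, the path simply does not exist, so an explicit check can give a clearer error. The helper name exported_torch_weights is hypothetical, and the layout results/padim/custom/run/weights/torch/model.pt (project path, model, dataset, then run/weights/torch/model.pt) is an assumption taken from the path used in this article.

```python
from pathlib import Path


def exported_torch_weights(project_path: str, model: str, dataset: str) -> Path:
    """Hypothetical helper: return the model.pt exported with export_mode: torch.

    Assumed layout: <project_path>/<model>/<dataset>/run/weights/torch/model.pt
    """
    weights = Path(project_path) / model / dataset / "run" / "weights" / "torch" / "model.pt"
    if not weights.is_file():
        raise FileNotFoundError(
            f"{weights} not found; was training run with export_mode: torch?"
        )
    return weights


if __name__ == "__main__":
    # Matches the --weights argument above when run from the repository root.
    try:
        print(exported_torch_weights("results", "padim", "custom"))
    except FileNotFoundError as err:
        print(err)
```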

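Creating the inference program

Copy torch_inference.py and name the copy custom_inference.py, then edit it as follows. The arguments are hard-coded and the visualizer is commented out, so the script only prints the score and label and optionally shows the input image.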

```python
"""Anomalib Torch Inferencer Script.

This script performs torch inference on a single image using settings
hard-coded in the script, and prints the prediction score and label.
"""

# Copyright (C) 2022 Intel Corporation
# SPDX-License-Identifier: Apache-2.0

from argparse import Namespace

import cv2
import torch

from anomalib.deploy import TorchInferencer

# from anomalib.post_processing import Visualizer


def infer(args: Namespace) -> None:
    """Infer predictions.

    Run inference on the image at args.input, print the result, and
    show the input image if args.show is set.

    Args:
        args (Namespace): The inference settings.
    """
    torch.set_grad_enabled(False)

    # Create the inferencer (the visualizer is not used in this version).
    inferencer = TorchInferencer(path=args.weights, device=args.device)
    # visualizer = Visualizer(mode=args.visualization_mode, task=args.task)

    # OpenCV reads images as BGR; convert to RGB before inference.
    image = cv2.imread(args.input)
    if image is None:
        raise FileNotFoundError(f"Could not read image: {args.input}")
    image = cv2.cvtColor(image, cv2.COLOR_BGR2RGB)

    predictions = inferencer.predict(image=image)
    print("pred_score: ", predictions.pred_score, "pred_label: ", predictions.pred_label)

    # output = visualizer.visualize_image(predictions)
    # if args.output:
    #     file_path = generate_output_image_filename(input_path=args.input, output_path=args.output)
    #     visualizer.save(file_path=file_path, image=output)

    # Show the image in case the flag is set by the user.
    if args.show:
        # visualizer.show(title="Output Image", image=output)
        image = cv2.cvtColor(image, cv2.COLOR_RGB2BGR)  # cv2.imshow expects BGR
        cv2.imshow("frame", image)
        cv2.waitKey(0)
        cv2.destroyAllWindows()


if __name__ == "__main__":
    # Settings are hard-coded instead of being parsed from the command line.
    args = Namespace(
        input="./datasets/MVTec/custom/test/defect/1.png",
        config="src/anomalib/models/padim/config.yaml",
        weights="results/padim/custom/run/weights/torch/model.pt",
        # output="results/padim/custom/test/1_result.png",
        device="cpu",
        show=True,
        visualization_mode="simple",
        task="classification",
    )
    infer(args=args)
```

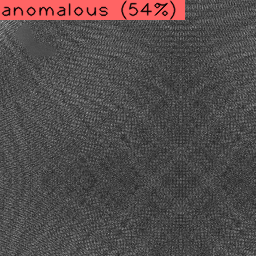

Running the above produces the following output.

```
pred_score: 0.5353217090536091 pred_label: Anomalous
```

Conclusion

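In this article, I modified the anomalib inference program.

Because predict accepts an image array directly, data captured from a camera can now be passed in as-is and the result obtained as numbers.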
